Since the dawn of time, people have been using tools to make their life easier. Tools let you solve a problem at hand more quickly and more efficiently, so that you can spend your time doing more interesting things. The stone axe, for example, enabled your prehistoric hunter to cut up meat more efficiently, thus leaving the tribe with time to do much more satisfying activities such as grilling mammoth steaks and painting on cave walls.

Of course, not all tools are equal, and tools have a tendency to evolve. If you go down to your local hardware store nowadays, you can probably find something even better to chop up your firewood or to chop down a tree. In all things, it is important to find the tool that is most appropriate for the job at hand.

The software industry is no exception in this regard. There are thousands of tools out there, and there is a good chance that some of these can help you work more efficiently. If you use them well, they will enable you to work better and smarter, producing higher quality software, and avoiding too much overtime on late software projects. The hardest thing is knowing what tools exist, and how you can put them to good use.

That’s where this book can help. In a nutshell, this book is about software development tools that you can use to make your life easier. And, in particular, tools that can help you optimize your software development life cycle.

The Software Development Life Cycle (or SDLC) is basically the process you follow to produce working software. This naturally involves coding, but there is more to building an application than just cutting code.

You also need a build environment.

When I talk of a build environment, I am referring to everything that contributes to letting the developers get on with their job: coding. This can include things like a version control system (to store your source code) and an issue management system (to keep track of your bugs). It can also include integration, testing, and staging platforms, in which your application will be deployed at different stages. But the build environment also includes tools that make life easier for the developer. For example, build scripts that help you compile, package and deploy your application in a consistent and reproducible manner. Testing tools that make testing a less painful task, and therefore encourage developers to test their code. And much more.

Let’s look at a concrete example. My picture of an efficient, productive build environment goes something along the following lines.

You build your application using a finely tuned build script. Your build script is clean, portable, and maintainable. It runs on any machine, and a new developer can simply check the project out of the version control system and be up and running immediately. It just works.

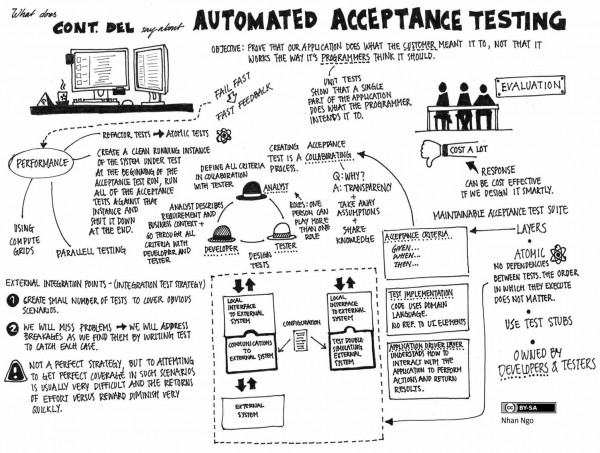

You store your code in a central source code repository. Whenever you commit your code, a build server detects the change to the code base, and automatically runs a full suite of unit, integration, and functional tests. If any problems crop up, you (and the rest of the team) are immediately notified. You have learned from experience that committing small, regular changes makes the integration process go a whole lot smoother, and you organize your work accordingly.

You use an issue tracking system to keep tabs on features to implement, bugs to fix, or any other jobs that need doing. When you commit changes to your source code repository, you mention the issues that this change addresses in the commit message. This message is automatically recorded against these issue in the issue management system. Furthermore, if you say “Fixes #101,” the issue automatically will be closed.

Conversely, when you view an issue in the issue management system, you can also see not only the commit messages but also the exact modifications that were made in the source code. When you prepare a release, it is easy to compile a set of reliable release notes based on the issues that were reported as fixed in the issue tracking system since the last release.

Your team now writes unit tests as a matter of habit. It wasn’t easy to start with, but over time, coaching and peer programming have convinced everyone of the merits of test-driven development. Automatic test coverage tools help them ensure that their unit tests aren’t missing out on any important code. The extensive test suite, combined with the test coverage reports, gives them enough confidence to refactor their code as necessary. This, in turn, helps keep the code at a high level of reliability and flexibility.

Coding standards and best practices are actively encouraged. A battery of automatic code auditing tools checks for any violations of the agreed set of rules. These tools raise issues relating to coding standards as well as potential defects. They also note any code that is not sufficiently documented.

These statistics can be viewed at any time, but, each week, the code audit statistics are reviewed in a special team meeting. The developers can see how they are doing as a team, and how the statistics have changed since last week. This encourages the developers to incorporate coding standards and best practices into their daily work. Because collective code ownership is actively encouraged, and peer-programming is quite frequent, these statistics also helps to foster pride in the code they are writing.

Occasionally, some of the violations are reviewed in more detail during this meeting. This gives people the opportunity to discuss the relevence of such and such a rule in certain circumstances, or to learn about why, and how, this rule should be respected.

You can view up-to-date technical documentation about your project and your application at any time. This documentation is a combination of human-written high-level architecture and design guidelines, and low-level API documentation for your application, including graphical UML class diagrams and database schemas. The automatic code audits help to ensure that the code itself is adequately documented.

The cornerstone of this process is your Continuous Integration, or CI, server. This powerful tool binds the other tools into one coherent, efficient, process. It is this server that monitors your source code repository, and automatically builds and tests your application whenever a new set of changes are committed. The CI server also takes care of automatically running regular code audits and generating the project documentation. It automatically deploys your application to an integration server, for all to see and play around with at any time. It maintains a graphical dashboard, where team members and project sponsors can get a good idea of the general state of health of your appplication at a glance. And it keeps track of builds, making it easier to deploy a specific version into the test, staging, or production environments when the time comes.

None of the tools discussed in this book are the silver bullet that will miraculously solve all your team’s productivity issues. To yield its full benefits, any tool needs to be used properly. And the proper use of many of these tools can entail significant changes in the way you work. For example, to benefit from automated testing, developers must get into the habit of writing unit tests for their code. For a continous integration system to provide maximum benefits, they will need to learn to commit frequent, small changes to the version control system, and to organize their work accordingly. And, if you want to generate half-decent technical documentation automatically, you will need to make sure that your code is well-commented in the first place.

You may need to change the way people work, which is never easy. This can involve training, coaching, peer-programming, mentoring, championing, bribing, menacing, or some combination of the above. But, in the long run, it’s worth it.