Introduction

What Can I Do?

Creating A .htaccess File

What is .htaccess

Why Not to use .htaccess

Error Documents | Custom Error Pages

Blocking users by IP

Blocking users/ sites by referrer

Block traffic from a single referrer

Block traffic from multiple referrers

Blocking bad bots and site rippers

Change your default directory page

Redirects

Prevent viewing of .htaccess file

Adding MIME Types

Preventing hot linking of images and other file types

Preventing Directory Listing

Save bandwidth with .htaccess!

Disable directory listings

Hot link prevention techniques

Protecting your images and (zip) files from linking

Reference

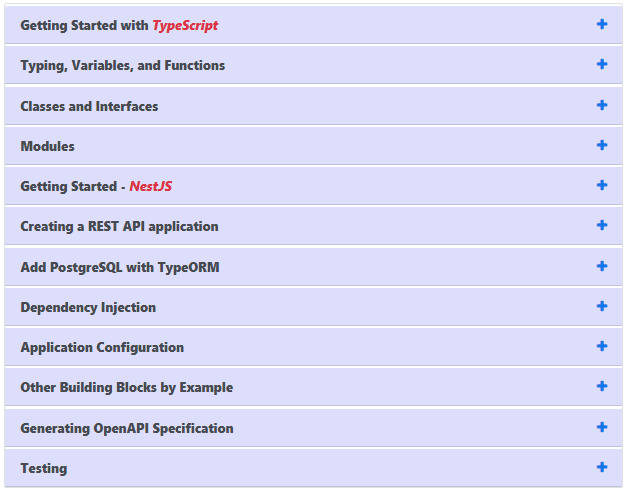

Introduction

In this tutorial you will find out about the .htaccess file and the power it has to improve your website. Although .htaccess is only a file, it can change settings on the servers and allow you to do many different things, the most popular being able to have your own custom 404 error pages. .htaccess isn’t difficult to use and is really just made up of a few simple instructions in a text file.

What Can I Do?

You may be wondering what .htaccess can do, or you may have read about some of its uses but don’t realise how many things you can actually do with it.

There is a huge range of things .htaccess can do, including: password protecting folders, redirecting users automatically, custom error pages, changing your file extensions, banning users with certain IP addresses, only allowing users with certain IP addresses, stopping directory listings and using a different file as the index file.

Creating A .htaccess File

Creating a .htaccess file may cause you a few problems. Writing the file is easy: you just need to enter the appropriate code into a text editor (like Notepad). You may run into problems with saving the file, though. Because .htaccess is a strange file name (the file actually has no name, just an 8-letter file extension), it may not be accepted on certain systems (e.g. Windows 3.1). With most operating systems, though, all you need to do is save the file by entering the name as:

".htaccess"

(including the quotes). If this doesn’t work, you will need to name it something else (e.g. htaccess.txt) and then upload it to the server. Once you have uploaded the file you can then rename it using an FTP program.

Warning

Before you begin using .htaccess, I should give you one warning. Using .htaccess on your server is unlikely to cause lasting problems (a mistake in the file usually just produces a 500 Internal Server Error until you fix or remove it), but you should be wary if you are using the Microsoft FrontPage Extensions. The FrontPage extensions use the .htaccess file themselves, so you should not really edit it to add your own information. If you do want to (this is not recommended, but possible), download the .htaccess file from your server first (if it exists) and then add your code to the beginning.

What is .htaccess

.htaccess is a configuration file for use on web servers running the Apache Web Server software. When a .htaccess file is placed in a directory which is in turn ‘loaded via the Apache Web Server’, then the .htaccess file is detected and executed by the Apache Web Server software. These .htaccess files can be used to alter the configuration of the Apache Web Server software to enable/disable additional functionality and features that the Apache Web Server software has to offer. These facilities include basic redirect functionality, for instance if a 404 file not found error occurs, or for more advanced functions such as content password protection or image hot link prevention.

.htaccess files (or “distributed configuration files”) provide a way to make configuration changes on a per-directory basis. A file, containing one or more configuration directives, is placed in a particular document directory, and the directives apply to that directory, and all subdirectories thereof.

Simply put, they are invisible plain text files where one can store server directives. Server directives are most of what you might put in an Apache config file (httpd.conf) or even a php.ini, but unlike those “master” directive files, these .htaccess directives apply only to the folder in which the .htaccess file resides, and all the folders inside.

This ability to plant .htaccess files in any directory of our site allows us to set up a finely-grained tree of server directives, each subfolder inheriting properties from its parent, whilst at the same time adding to, or over-riding certain directives with its own .htaccess file. For instance, you could use .htaccess to enable indexes all over your site, and then deny indexing in only certain subdirectories, or deny index listings site-wide, and allow indexing in certain subdirectories. One line in the .htaccess file in your root and your whole site is altered. From here on, I’ll probably refer to the main .htaccess in the root of your website as “the master .htaccess file”, or “main” .htaccess file.
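As a rough sketch of that inheritance, assuming the host's AllowOverride setting permits the Options directive and using a hypothetical /private/ subdirectory, the two files might look like this:

```apache
# File 1: /.htaccess (the "master" file in the site root)
# Enable directory listings for the whole site.
Options +Indexes

# File 2: /private/.htaccess (hypothetical subdirectory)
# Override the inherited setting: no listings here or below.
Options -Indexes
```

The two fragments are shown together only for comparison; each belongs in its own .htaccess file.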

There’s a small performance penalty for all this .htaccess file checking, but it’s rarely noticeable, and most of the time it’s simply switched on and there’s nothing you can do about it anyway, so let’s make the most of it.

Why Not to use .htaccess

There are two main reasons to avoid the use of .htaccess files.

- The first of these is performance. When AllowOverride is set to allow the use of .htaccess files, Apache will look in every directory for .htaccess files. Thus, permitting .htaccess files causes a performance hit, whether or not you actually even use them! Also, the .htaccess file is loaded every time a document is requested.

However, putting this configuration in your server configuration file will result in less of a performance hit, as the configuration is loaded once when Apache starts, rather than every time a file is requested.

- The second consideration is one of security. You are permitting users to modify server configuration, which may result in changes over which you have no control. Carefully consider whether you want to give your users this privilege.
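To illustrate the first point, here is a minimal sketch of moving settings into the main server configuration. It assumes you have access to httpd.conf and that your site lives under the hypothetical path /var/www/example/htdocs:

```apache
# httpd.conf (main server configuration, read once at startup)
<Directory "/var/www/example/htdocs">
    # Stop Apache looking for .htaccess files under this directory
    AllowOverride None

    # Directives that would otherwise live in .htaccess go here
    Options -Indexes
    ErrorDocument 404 /errors/notfound.html
</Directory>
```

On shared hosting you usually cannot edit this file, which is why the rest of this tutorial sticks to .htaccess.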

.htaccess files must be uploaded in ASCII mode, not BINARY. You may need to CHMOD the .htaccess file to 644 (rw-r--r--). This makes the file readable by the server while letting only its owner modify it. File permissions alone do not stop a browser from requesting the file; that is handled by the server configuration, as described under “Prevent viewing of .htaccess file”.

Error Documents | Custom Error Pages

In order to specify your own ErrorDocuments, you need to be slightly familiar with the server returned error codes (listed in the table below). You do not need to specify error pages for all of these; in fact, you shouldn’t. An ErrorDocument for code 200 would cause an infinite loop whenever a page was found, which would not be good.

In order to specify your own customized error documents, you simply need to add the following command, on one line, within your htaccess file:

ErrorDocument code /directory/filename.ext

or

ErrorDocument 404 /errors/notfound.html

This would cause any request resulting in a 404 error to be forwarded to yoursite.com/errors/notfound.html.

Likewise with:

ErrorDocument 500 /errors/internalerror.html

| Code | Successful Client Requests | Code | Client Request Redirected |
|------|----------------------------|------|---------------------------|
| 200 | OK | 300 | Multiple Choices |
| 201 | Created | 301 | Moved Permanently |
| 202 | Accepted | 302 | Moved Temporarily |
| 203 | Non-Authoritative Information | 303 | See Other |
| 204 | No Content | 304 | Not Modified |
| 205 | Reset Content | 305 | Use Proxy |
| 206 | Partial Content | | |

| Code | Client Request Errors | Code | Server Errors |
|------|-----------------------|------|---------------|
| 400 | Bad Request | 500 | Internal Server Error |
| 401 | Authorization Required | 501 | Not Implemented |
| 402 | Payment Required (not used yet) | 502 | Bad Gateway |
| 403 | Forbidden | 503 | Service Unavailable |
| 404 | Not Found | 504 | Gateway Timeout |
| 405 | Method Not Allowed | 505 | HTTP Version Not Supported |
| 406 | Not Acceptable (encoding) | | |
| 407 | Proxy Authentication Required | | |
| 408 | Request Timed Out | | |
| 409 | Conflicting Request | | |
| 410 | Gone | | |
| 411 | Content Length Required | | |
| 412 | Precondition Failed | | |
| 413 | Request Entity Too Large | | |
| 414 | Request URI Too Long | | |
| 415 | Unsupported Media Type | | |

If you were to use an error document handler for each of the error codes I mentioned, the htaccess file would look like the following (note each command is on its own line):

ErrorDocument 400 /errors/badrequest.html

ErrorDocument 401 /errors/authreqd.html

ErrorDocument 403 /errors/forbid.html

ErrorDocument 404 /errors/notfound.html

ErrorDocument 500 /errors/serverr.html

You can specify a full URL rather than a virtual URL in the ErrorDocument string (http://yoursite.com/errors/notfound.html vs. /errors/notfound.html), but this is not the preferred method: with a full URL, Apache sends the browser a redirect to that address, so the client never receives the original error status code.

You can also specify HTML, believe it or not!

ErrorDocument 401 "<body bgcolor=#ffffff><h1>You have to actually <b>BE</b> a <a href="#">member</a> to view this page, Colonel!

The only time I use that HTML option is if I am feeling particularly saucy, since you can have so much more control over the error pages when used in conjunction with xSSI or CGI or both. Also note that the ErrorDocument text starts with a " just before the HTML starts, but does not end with one. That unmatched quote is the Apache 1.3 syntax; Apache 2.0 and later expect the message to be enclosed in a matching pair of quotes. And again, that should all be on one line, no naughty word wrapping!

Blocking users by IP

Is there a pesky person perpetrating pain upon you? Stalking your site from the vastness of the electron void? Block ’em! In your htaccess file, add the following code, changing the IPs to suit your needs, with each command on its own line:

order allow,deny

deny from 123.45.6.7

deny from 012.34.5.

allow from all

You can deny access based upon an IP address or an IP block. The above blocks access to the site from 123.45.6.7, and from any address in the IP block 012.34.5. (012.34.5.1, 012.34.5.2, 012.34.5.3, etc.). I have yet to find a useful application of this; maybe if there is a site scraping your content you can block them, who knows.

You can also set an option for deny from all, which would of course deny everyone. You can also allow or deny by domain name rather than IP address (allow from .javascriptkit.com works for www.javascriptkit.com or virtual.javascriptkit.com, etc.)
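On Apache 2.4 the Order, Allow and Deny directives only work through the mod_access_compat module. As a rough equivalent of the block above in the newer Require syntax (a sketch reusing the same example addresses; it assumes your host permits authorization directives in .htaccess):

```apache
<RequireAll>
    # Let everyone in by default...
    Require all granted
    # ...except this single address
    Require not ip 123.45.6.7
    # ...and every address starting with 12.34.5
    Require not ip 12.34.5
</RequireAll>
```

Pick one style per file; mixing the old and new directives in the same scope tends to produce confusing results.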

Blocking users/ sites by referrer

Blocking users or sites that originate from a particular domain is another useful trick of .htaccess. Let’s say you check your logs one day, and see tons of referrals from a particular site, yet upon inspection you can’t find a single visible link to your site on theirs. The referral isn’t a “legitimate” one, with the site most likely hot linking to certain files on your site such as images, .css files, or files you can’t even make out. Remember, your logs will generate a referrer entry for any kind of reference to your site that has a traceable origin.

Before I get to the code itself, it’s important to note that blocking access by referrer in .htaccess requires the help of the Apache module mod_rewrite to make out the referrer first. This module is installed by default on most servers (ask your host if you’re not sure). So, to deny access to all traffic that originates from a particular domain (referrer), use the following code:

Block traffic from a single referrer:

RewriteEngine on

# Options +FollowSymlinks

RewriteCond %{HTTP_REFERER} badsite\.com [NC]

RewriteRule .* - [F]

Block traffic from multiple referrers

RewriteEngine on

# Options +FollowSymlinks

RewriteCond %{HTTP_REFERER} badsite\.com [NC,OR]

RewriteCond %{HTTP_REFERER} anotherbadsite\.com [NC]

RewriteRule .* - [F]

In the “single referrer” case above, “badsite\.com” is the domain you wish to block. Note the backslash preceding the period (“.”) to actually denote a period; in Regular Expressions, an unescaped period denotes any character, which is not what we want. The flag “[NC]” is added to the end of the domain to make it case insensitive, so whether the domain is “badsite.com”, “Badsite.com” etc, however bad it gets, it gets blocked. Finally, the last line in the .htaccess file specifies that the action to take when a match is found is to fail the request, meaning the referrer traffic will hit a 403 Forbidden error. The only difference between blocking a single referrer and multiple referrers is the modified [NC,OR] flag, which in the latter case is added to every domain but the last.

Now, you may have noticed the line “Options +FollowSymlinks” above, which is commented out. Uncomment this line if your server isn’t configured with FollowSymLinks in its <Directory> section in httpd.conf, and you get a 500 Internal Server Error when using the code above as is.
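One thing to be aware of: a pattern like badsite\.com matches anywhere in the referrer string, so it would also catch “notbadsite.com” or a URL that merely mentions the name. If that matters to you, a stricter variant might anchor the pattern to the host part of the URL. This is only a sketch, with badsite.com as the placeholder domain:

```apache
RewriteEngine on
# Match the referring host itself (with or without a subdomain),
# rather than any URL that merely contains the text "badsite.com".
RewriteCond %{HTTP_REFERER} ^https?://([^/]+\.)?badsite\.com([/:]|$) [NC]
RewriteRule .* - [F]
```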

Blocking bad bots and site rippers (aka offline browsers)

Below is a useful code block you can insert into your .htaccess file for blocking a lot of the known bad bots and site rippers currently out there. It is derived from my reading of the excellent discussion “A close to perfect .htaccess file”, specifically, “A close to perfect .htaccess file II.” Simply add the below code to your .htaccess file:

Refer More…

http://www.webmasterworld.com/forum13/687-1-10.htm

http://www.webmasterworld.com/forum92/205.htm

RewriteEngine On

RewriteCond %{HTTP_USER_AGENT} ^BlackWidow [OR]

RewriteCond %{HTTP_USER_AGENT} ^Bot\ mailto:craftbot@yahoo.com [OR]

RewriteCond %{HTTP_USER_AGENT} ^ChinaClaw [OR]

RewriteCond %{HTTP_USER_AGENT} ^Custo [OR]

RewriteCond %{HTTP_USER_AGENT} ^DISCo [OR]

RewriteCond %{HTTP_USER_AGENT} ^Download\ Demon [OR]

RewriteCond %{HTTP_USER_AGENT} ^eCatch [OR]

RewriteCond %{HTTP_USER_AGENT} ^EirGrabber [OR]

RewriteCond %{HTTP_USER_AGENT} ^EmailSiphon [OR]

RewriteCond %{HTTP_USER_AGENT} ^EmailWolf [OR]

RewriteCond %{HTTP_USER_AGENT} ^Express\ WebPictures [OR]

RewriteCond %{HTTP_USER_AGENT} ^ExtractorPro [OR]

RewriteCond %{HTTP_USER_AGENT} ^EyeNetIE

# No [OR] on the final condition; matching user agents get 403 Forbidden

RewriteRule .* - [F,L]

Bots that are listed above will all receive a 403 Forbidden error when trying to view your site. The amount of bandwidth savings and decrease in server resource usage as a result may be significant in many cases.

Change your default directory page

Some of you may be wondering, just what in the world is a DirectoryIndex? Well, grasshopper, this is a command which allows you to specify a file that is to be loaded as your default page whenever a directory or URL request comes in that does not specify a specific page. Tired of having yoursite.com/index.html come up when you go to yoursite.com? Want to change it to be yoursite.com/ILikePizzaSteve.html that comes up instead? No problem!

DirectoryIndex filename.html

This would cause filename.html to be treated as your default page, or default directory page. You can also append other filenames to it. You may want to have certain directories use a script as a default page. That’s no problem either!

DirectoryIndex filename.html index.cgi index.pl default.htm

Placing the above command in your htaccess file will cause this to happen: When a user types in yoursite.com, your site will look for filename.html in your root directory (or any directory if you specify this in the global htaccess), and if it finds it, it will load that page as the default page. If it does not find filename.html, it will then look for index.cgi; if it finds that one, it will load it, if not, it will look for index.pl and the whole process repeats until it finds a file it can use. Basically, the list of files is read from left to right.

Redirects

Ever go through the nightmare of changing significant portions of your site, then having to deal with the problem of people finding their way from the old pages to the new? It can be nasty. There are different ways of redirecting pages, through http-equiv, javascript or any of the server-side languages. And then you can do it through htaccess, which is probably the most effective, considering the minimal amount of work required to do it.

htaccess uses redirect to look for any request for a specific page (or a non-specific location, though this can cause infinite loops) and if it finds that request, it forwards it to a new page you have specified:

Redirect /olddirectory/oldfile.html http://yoursite.com/newdirectory/newfile.html

Note that there are 3 parts to that, which should all be on one line: the Redirect command, the location of the file/directory you want redirected relative to the root of your site (/olddirectory/oldfile.html = yoursite.com/olddirectory/oldfile.html) and the full URL of the location you want that request sent to. The 3 parts are separated by single spaces, all on one line. You can also redirect an entire directory by simply using Redirect /olddirectory http://yoursite.com/newdirectory/

Using this method, you can redirect any number of pages no matter what you do to your directory structure. It is the fastest method that has a global effect.
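Redirect also accepts an optional status argument. Without one, Apache sends a temporary (302) redirect; for pages that have moved for good you may prefer a permanent one. A short sketch using the same placeholder paths as above:

```apache
# 301 tells browsers and search engines the move is permanent;
# without a status argument, Redirect sends a temporary 302.
Redirect 301 /olddirectory/oldfile.html http://yoursite.com/newdirectory/newfile.html

# The keyword form is equivalent to 301.
Redirect permanent /olddirectory http://yoursite.com/newdirectory
```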

Prevent viewing of .htaccess file

If you use htaccess for password protection, then the location containing all of your password information is plainly available through the htaccess file. If you have set incorrect permissions or if your server is not as secure as it could be, a browser has the potential to view an htaccess file through a standard web interface and thus compromise your site/server. This, of course, would be a bad thing. However, it is possible to prevent an htaccess file from being viewed in this manner:

<Files .htaccess>

order allow,deny

deny from all

</Files>

The first line specifies that the file named .htaccess is having this rule applied to it. You could use this for other purposes as well if you get creative enough.

If you use this in your htaccess file, a person trying to see that file would get returned (under most server configurations) a 403 error code. You can also set permissions for your htaccess file via CHMOD as an added measure of security: 644 or rw-r--r--
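On Apache 2.4, where Order and Deny are only available through mod_access_compat, the same protection can be sketched with the newer Require directive (assuming your host allows authorization directives in .htaccess):

```apache
<Files ".htaccess">
    # Refuse every request for this file
    Require all denied
</Files>
```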

Adding MIME Types

What if your server wasn’t set up to deliver certain file types properly? This is a common occurrence with MP3 or even SWF files, and simple enough to fix:

AddType application/x-shockwave-flash swf

AddType specifies that you are adding a MIME type. The application/x-shockwave-flash string is the MIME type itself, and the final part is the file extension to associate with it; in our example this is swf, for Shockwave Flash files.
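The MP3 case mentioned above works the same way. A small sketch (the JPEG line is only there to show that one type can take several extensions):

```apache
# Serve .mp3 files as MPEG audio
AddType audio/mpeg .mp3

# One MIME type can be mapped to several extensions at once
AddType image/jpeg .jpg .jpeg
```

The leading dot on the extension is optional, so swf and .swf are treated alike.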

Preventing hot linking of images and other file types

In the webmaster community, “hot linking” is a curse phrase. Also known as “bandwidth stealing” by the angry site owner, it refers to linking directly to non-html objects not on one’s own server, such as images, .js files etc. The victim’s server in this case is robbed of bandwidth (and in turn money) as the violator enjoys showing content without having to pay for its delivery. The most common practice of hot linking pertains to another site’s images.

Using .htaccess, you can disallow hot linking on your server, so those attempting to link to an image or CSS file on your site, for example, are either blocked (failed request, such as a broken image) or served different content (i.e. an image of an angry man). Note that mod_rewrite needs to be enabled on your server in order for this aspect of .htaccess to work. Ask your web host about this.

With all the pieces in place, here’s how to disable hot linking of certain file types on your site, in the case below, images, JavaScript (js) and CSS (css) files on your site. Simply add the below code to your .htaccess file, and upload the file either to your root directory, or a particular subdirectory to localize the effect to just one section of your site:

RewriteEngine on

RewriteCond %{HTTP_REFERER} !^$

RewriteCond %{HTTP_REFERER} !^http://(www\.)?mydomain\.com/.*$ [NC]

RewriteRule \.(gif|jpg|js|css)$ - [F]

Be sure to replace “mydomain.com” with your own. The above code creates a failed request when hot linking of the specified file types occurs. In the case of images, a broken image is shown instead.
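If your site is also served over https, or you want to cover a few more extensions, the same idea can be stretched a little. The following is only a sketch, with mydomain.com as a placeholder and an extension list you should adjust to your own files:

```apache
RewriteEngine on
# Requests with no referrer at all are let through
RewriteCond %{HTTP_REFERER} !^$
# Accept both http and https referrers from your own domain
RewriteCond %{HTTP_REFERER} !^https?://(www\.)?mydomain\.com(/|$) [NC]
# Match the extensions case-insensitively and answer 403 Forbidden
RewriteRule \.(gif|jpe?g|png|js|css)$ - [F,NC]
```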

Serving alternate content when hot linking is detected

You can set up your .htaccess file to actually serve up different content when hot linking occurs. This is more commonly done with images, such as serving up an Angry Man image in place of the hot linked one. The code for this is:

RewriteEngine on

RewriteCond %{HTTP_REFERER} !^$

RewriteCond %{HTTP_REFERER} !^http://(www\.)?mydomain\.com/.*$ [NC]

RewriteRule \.(gif|jpg)$ http://www.mydomain.com/angryman.gif [R,L]

Same deal- replace mydomain.com with your own, plus angryman.gif.
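One catch to watch for: if the replacement image lives on the same site and has one of the protected extensions, the request for it can match the rule again and be redirected in a loop. A hedged variant that excludes the replacement image itself (angryman.gif and mydomain.com are the same placeholders as above):

```apache
RewriteEngine on
RewriteCond %{HTTP_REFERER} !^$
RewriteCond %{HTTP_REFERER} !^https?://(www\.)?mydomain\.com/ [NC]
# Skip the replacement image itself so it is not redirected again
RewriteCond %{REQUEST_URI} !/angryman\.gif$
RewriteRule \.(gif|jpg)$ http://www.mydomain.com/angryman.gif [R,L]
```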

Time to pour a bucket of cold water on hot linking!

Preventing Directory Listing

Do you have a directory full of images or zips that you do not want people to be able to browse through? Typically a server is set up to prevent directory listing, but sometimes it is not. If not, become self-sufficient and fix it yourself:

IndexIgnore *

The * is a wildcard that matches all files, so if you stick that line into an htaccess file in your images directory, nothing in that directory will be allowed to be listed.

On the other hand, what if you did want the directory contents to be listed, but only if they were HTML pages and not images? Simple says I:

IndexIgnore *.gif *.jpg

This would return a list of all files not ending in .jpg or .gif, but would still list .txt, .html, etc.

And conversely, if your server is set up to prevent directory listing, but you want to list the directories by default, you could simply throw this into an htaccess file in the directory you want displayed:

Options +Indexes

If you do use this option, be very careful that you do not put any unintentional or compromising files in this directory. And if you guessed it by the plus sign before Indexes, you can throw in a minus sign (Options -Indexes) to prevent directory listing entirely. This is typical of most server setups and is usually configured elsewhere in the Apache server, but can be overridden through htaccess.

If you really want to be tricky, using the +Indexes option, you can include a default description for the directory listing that is displayed when you use it by placing a file called HEADER in the same directory. The contents of this file will be printed out before the list of directory contents is listed. You can also specify a footer, though it is called README, by placing it in the same directory as the HEADER. The README file is printed out after the directory listing is printed.
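If you would rather choose the file names yourself, mod_autoindex provides the HeaderName and ReadmeName directives. A small sketch, assuming listings are allowed for the directory and using hypothetical file names:

```apache
Options +Indexes
# File whose contents are shown above the listing
HeaderName intro.html
# File whose contents are shown below the listing
ReadmeName footer.html
```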

Typically servers are set up to prevent directory listing, but often they aren’t. If you have a directory full of downloads or images that you don’t want people to be able to browse through, add the following line to your .htaccess file…

IndexIgnore *

The * matches all files. If, for example, you want to prevent only listing of images, use…

IndexIgnore *.gif *.jpg

Alternative Index Files

You may not always want to use index.htm or index.html as your index file for a directory, for example if you are using PHP files in your site, you may want index.php to be the index file for a directory. You are not limited to ‘index’ files though. Using .htaccess you can set foofoo.blah to be your index file if you want to!

Alternate index files are entered in a list. The server will work from left to right, checking to see if each file exists; if none of them exist it will display a directory listing (unless, of course, you have turned this off).

DirectoryIndex index.php index.php3 messagebrd.pl index.html index.htm

Password Protection with .htaccess

Although there are many uses of the .htaccess file, by far the most popular, and probably most useful, is being able to reliably password protect directories on websites. Although JavaScript etc. can also be used to do this, only .htaccess enforces the check on the server, so someone must know the password to get into the directory and there are no ‘back doors’. Bear in mind that Basic authentication sends the password with only trivial encoding, so it is best combined with HTTPS.

The .htaccess File

Adding password protection to a directory using .htaccess takes two stages. The first part is to add the appropriate lines to your .htaccess file in the directory you would like to protect. Everything below this directory will be password protected:

AuthName "Section Name"

AuthType Basic

AuthUserFile /full/path/to/.htpasswd

Require valid-user

There are a few parts of this which you will need to change for your site. You should replace “Section Name” with the name of the part of the site you are protecting e.g. “Members Area”.

The /full/path/to/.htpasswd should be changed to reflect the full server path to the .htpasswd file (more on this later). If you do not know what the full path to your webspace is, contact your system administrator for details.
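Filled in, the block might look something like the sketch below. The path and user names are hypothetical, and this version uses Require user to admit only named accounts rather than every user in the password file:

```apache
# Hypothetical path and user names, for illustration only
AuthType Basic
AuthName "Members Area"
AuthUserFile /home/example/private/.htpasswd
# Admit only the named accounts instead of every user in the file
Require user alice bob
```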

The .htpasswd File

Password protecting a directory takes a little more work than any of the other .htaccess functions because you must also create a file to contain the usernames and passwords which are allowed to access the site. These should be placed in a file which (by default) should be called .htpasswd. Like the .htaccess file, this is a file with no name and an 8-letter extension. This can be placed anywhere within your website (as the passwords are encrypted) but it is advisable to store it outside the web root so that it is impossible to access it from the web.

Entering Usernames And Passwords

Once you have created your .htpasswd file (you can do this in a standard text editor) you must enter the usernames and passwords to access the site. They should be entered as follows:

username:password

where the password is the encrypted format of the password. To encrypt the password you will either need to use one of the premade scripts available on the web or write your own; Apache also ships with a command-line utility, htpasswd, for this purpose. There is a good username/password service at the KxS site which will allow you to enter the user name and password and will output it in the correct format.

For multiple users, just add extra lines to your .htpasswd file in the same format as the first. There are even scripts available for free which will manage the .htpasswd file and will allow automatic adding/removing of users etc.

Accessing The Site

When you try to access a site which has been protected by .htaccess your browser will pop up a standard username/password dialog box. If you don’t like this, there are certain scripts available which allow you to embed a username/password box in a website to do the authentication. You can also send the username and password (unencrypted) in the URL as follows:

http://username:password@www.website.com/directory/

Save bandwidth with .htaccess!

If you pay for your bandwidth, this wee line could save you hard cash..

Save me hard cash! and help the internet!

<ifModule mod_php4.c>

php_value zlib.output_compression 16386

</ifModule>

All it does is enable PHP’s built-in transparent zlib compression. This can halve your bandwidth usage in one stroke, often more than that, in fact. Of course it only works with data being output by the PHP module, but if you design your pages with this in mind, you can use php echo statements, or better yet, php “includes” for your plain html output and just compress everything! Remember, if you run phpsuexec, you’ll need to put php directives in a local php.ini file, not .htaccess.
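For output that does not pass through PHP, Apache's own mod_deflate module can do a similar job. A minimal sketch, assuming mod_deflate (and, on Apache 2.4, mod_filter) is loaded on your server; the list of MIME types is only an example:

```apache
<IfModule mod_deflate.c>
    # Compress common text formats before they are sent
    AddOutputFilterByType DEFLATE text/html text/plain text/css application/javascript
</IfModule>
```

Images and zip files are already compressed, so there is little to gain from adding them to the list.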

“Bandwidth stealing,” also known as “hot linking,” is linking directly to non-html objects on another server, such as images, electronic books etc. The most common practice of hot linking pertains to another site’s images.

To disallow hot linking on your server, create the following .htaccess file and upload it to the folder that contains the images you wish to protect…

RewriteEngine on

RewriteCond %{HTTP_REFERER} !^$

RewriteCond %{HTTP_REFERER} !^http://(www\.)?YourSite\.com/.*$ [NC]

RewriteRule \.(gif|jpg)$ - [F]

Replace “YourSite.com” with your own. The above code causes a broken image to be displayed when it’s hot linked. If you’d like to display an alternate image in place of the hot linked one, replace the last line with…

RewriteRule \.(gif|jpg)$ http://www.YourSite.com/stop.gif [R,L]

Replace “YourSite.com” and stop.gif with your real names.

Hide and deny files..

Do you remember I mentioned that any file beginning with .ht is invisible? “Almost every web server in the world is configured to ignore them, by default”, and that is, of course, because .ht_anything files generally have server directives and passwords and stuff in them. Most servers will have something like this in their main configuration..

Standard setting..

<Files ~ "^\.ht">

Order allow,deny

Deny from all

Satisfy All

</Files>

which instructs the server to deny access to any file beginning with .ht, effectively protecting our .htaccess and other files. The “.” at the start prevents them being displayed in an index, and the .ht prevents them being accessed. This version..

ignore what you want

<Files ~ "^.*\.([Ll][Oo][Gg])">

Order allow,deny

Deny from all

Satisfy All

</Files>

tells the server to deny access to *.log files. You can insert multiple file types into each rule, separating them with a pipe “|”, and you can insert multiple blocks into your .htaccess file, too. I find it convenient to put all the files starting with a dot into one, and the files with denied extensions into another, something like this..

the whole lot

# deny all .htaccess, .DS_Store $hî†é and ._* (resource fork) files

<Files ~ "^\.([Hh][Tt]|[Dd][Ss]_[Ss]|[_])">

Order allow,deny

Deny from all

Satisfy All

</Files>

# deny access to all .log and .comment files

<Files ~ "^.*\.([Ll][Oo][Gg]|[cC][oO][mM][mM][eE][nN][tT])">

Order allow,deny

Deny from all

Satisfy All

</Files>

would cover all ._* resource fork files, .DS_Store files (which the Mac Finder creates all over the place) *.log files, *.comment files and of course, our .ht* files. You can add whatever file types you need to protect from direct access. I think it’s clear now why the file is called “.htaccess”.
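On Apache 2.4 the same two rules could be sketched with FilesMatch, an inline case-insensitive flag and the newer Require directive. This is an approximation, not a drop-in replacement: the second pattern is anchored with $, so it is slightly stricter than the original about what counts as a .log or .comment file.

```apache
# Dot-files: .ht*, .DS_Store and ._* resource forks
<FilesMatch "(?i)^\.(ht|ds_s|_)">
    Require all denied
</FilesMatch>

# Log and comment files, matched case-insensitively
<FilesMatch "(?i)\.(log|comment)$">
    Require all denied
</FilesMatch>
```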

Disable directory listings

Preventing directory listings can be very useful if for example, you have a directory containing important ‘.zip’ archive files or to prevent viewing of your image directories. Alternatively it can also be useful to enable directory listings if they are not available on your server, for example if you wish to display directory listings of your important ‘.zip’ files.

To prevent directory listings, create a .htaccess file following the main instructions and guidance which includes the following text:

IndexIgnore *

The above line tells the Apache Web Server to prevent directory listings of directories and files within the directory containing the .htaccess file. The ‘*’ represents a wildcard, which means it will not display any files. It is possible to prevent listings of only certain file types, so for example you can show listings of ‘.html’ files but not your ‘.zip’ files.

To prevent listing ‘.zip’ files, create a .htaccess file following the main instructions and guidance which includes the following text:

IndexIgnore *.zip

The above line tells the Apache Web Server to list all files except those that end with ‘.zip’.

To prevent listing multiple file types, create a .htaccess file following the main instructions and guidance which includes the following text:

IndexIgnore *.zip *.jpg *.gif

The above line tells the Apache Web Server to list all files except those that end with ‘.zip’, ‘.jpg’ or ‘.gif’.

Alternatively, if your server does not allow directory listings and you would like to enable them, create a .htaccess file following the main instructions and guidance which includes the following text:

Options +Indexes

The above line tells the Apache Web Server to enable directory listing within the directory containing this .htaccess file. You can also reverse this to disable directory listings by replacing the plus sign before the text ‘Indexes’ with a minus sign. e.g. ‘Options -Indexes’.

You can also include a default description for the directory listings that is displayed at the top of the page by placing a file called ‘HEADER’ in the same directory. The contents of this file are displayed before the list of directory contents. You can also include a footer, by creating a file called ‘README’. The contents of this file are displayed after the list of directory contents.
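The names of the header and footer files are controlled by the HeaderName and ReadmeName directives of mod_autoindex, so if the defaults do not work on your server they can be set explicitly. A minimal sketch, assuming directory listings are permitted and using the illustrative file names ‘HEADER.html’ and ‘README.html’ (any names of your own choosing would work the same way):

```apache
# Enable listings for this directory.
Options +Indexes

# File whose contents are shown above the listing (name is an assumption).
HeaderName HEADER.html

# File whose contents are shown below the listing (name is an assumption).
ReadmeName README.html
```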

Hot link prevention techniques

Hot link prevention refers to stopping web sites that are not your own from displaying your files or content by linking directly to them. This is most commonly used to prevent other web sites from displaying your images, but it can also be used to prevent people using your JavaScript or CSS (cascading style sheet) files. The problem with hot linking is that it uses your bandwidth, which in turn costs money; for this reason hot linking is often referred to as ‘bandwidth theft’.

Using .htaccess we can prevent other web sites from sourcing your content, and can even serve different content in its place. For example, it is common to display what is referred to as an ‘angry man’ image instead of the requested image.

Note, this functionality requires that ‘mod_rewrite’ is enabled on your server. Because it can place demands on system resources, it may not be enabled on every server, so be sure to check with your system administrator or web hosting company.

To set up hot link prevention for ‘.gif’, ‘.jpg’ and ‘.css’ files, create a .htaccess file following the main instructions and guidance which includes the following text:
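A minimal sketch of such rules, assuming the placeholder domain ‘yourdomain.com’ used in this guide and assuming that requests with no referrer at all (direct visits, or browsers that withhold the header) should still be allowed:

```apache
RewriteEngine On

# Allow requests that send no Referer header at all (an assumption; remove to be stricter).
RewriteCond %{HTTP_REFERER} !^$

# Allow requests referred by your own site, with or without "www".
RewriteCond %{HTTP_REFERER} !^https?://(www\.)?yourdomain\.com/ [NC]

# Everything else asking for these file types gets 403 Forbidden.
RewriteRule \.(gif|jpg|css)$ - [F,NC]
```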

The above lines tell the Apache Web Server to block all links to ‘.gif’, ‘.jpg’ and ‘.css’ files which are not from the domain name ‘http://www.yourdomain.com/’. Before uploading your .htaccess file, ensure you replace ‘yourdomain.com’ with the appropriate web site address.

To set up hot link prevention for ‘.gif’ and ‘.jpg’ files that displays alternate content (such as an angry man image), create a .htaccess file following the main instructions and guidance which includes the following text:
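A minimal sketch of such rules, again assuming the placeholder domain ‘yourdomain.com’ and a replacement image stored at ‘/hotlink.jpg’. The extra condition that excludes the replacement image itself is an addition for safety; without it, a hot-linked request could be redirected in a loop.

```apache
RewriteEngine On

# Allow requests that send no Referer header at all (an assumption; remove to be stricter).
RewriteCond %{HTTP_REFERER} !^$

# Allow requests referred by your own site, with or without "www".
RewriteCond %{HTTP_REFERER} !^https?://(www\.)?yourdomain\.com/ [NC]

# Never rewrite the replacement image itself, or the redirect would loop.
RewriteCond %{REQUEST_URI} !/hotlink\.jpg$

# Send every other hot-linked image request to the replacement image.
RewriteRule \.(gif|jpg)$ http://www.yourdomain.com/hotlink.jpg [R,L,NC]
```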

The above lines tell the Apache Web Server to block all links to ‘.gif’ and ‘.jpg’ files which are not from the domain name ‘http://www.yourdomain.com/’ and to display the file ‘http://www.yourdomain.com/hotlink.jpg’ instead. Before uploading your .htaccess file, ensure you replace ‘yourdomain.com’ with the appropriate web site address.

Protecting your images and (zip) files from linking

Module: mod_rewrite

Put a file named .htaccess in the directory where your images are located.

AuthUserFile /dev/null

AuthGroupFile /dev/null

RewriteEngine On

RewriteCond %{HTTP_REFERER} !^http://www\.widexl\.com [NC]

RewriteCond %{HTTP_REFERER} !^http://ma\.widexl\.com [NC]

RewriteCond %{HTTP_REFERER} !^http://members\.widexl\.com [NC]

RewriteCond %{HTTP_REFERER} !^http://widexl\.com [NC]

RewriteCond %{HTTP_REFERER} !^http://212\.204\.218\.80 [NC]

RewriteRule .* http://widexl.com/index.html [R,L]

In the RewriteCond lines, change the web addresses to those of the sites that are allowed to use your images.

In the RewriteRule line, change the web address to the page where requests from other sites should be sent.

Note: You need to write a new RewriteCond line for every web address (hostname).

Remember: http://widexl.com is not the same as http://www.widexl.com
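As an alternative to one line per hostname, the conditions for several hostnames of the same domain can usually be folded into a single regular expression. The following is only a sketch under that assumption, reusing the example hostnames from above; the ‘(/|$)’ at the end is an addition that stops look-alike hosts such as ‘widexl.com.example.net’ from matching.

```apache
RewriteEngine On

# One condition covering widexl.com and its www, ma and members hostnames.
RewriteCond %{HTTP_REFERER} !^http://((www|ma|members)\.)?widexl\.com(/|$) [NC]

# The server's IP address still needs its own condition.
RewriteCond %{HTTP_REFERER} !^http://212\.204\.218\.80(/|$) [NC]

# Requests from any other referrer are redirected to the home page.
RewriteRule .* http://widexl.com/index.html [R,L]
```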

Reference:

http://www.javascriptkit.com/howto/htaccess14.shtml

http://www.freewebmasterhelp.com/tutorials/htaccess/

http://www.htaccesstutorial.net/

http://www.askapache.com/htaccess/apache-htaccess.html

http://corz.org/serv/tricks/htaccess.php

http://www.htaccess-guide.com/index.php?a=1

http://www.askapache.com/htaccess/apache-htaccess.html#htaccess-code-examples

http://httpd.apache.org/docs/

Apache Tutorial: .htaccess Files – Official Apache documentation and guidelines.

Apache Directives – A list of directives available in the standard Apache distribution.

Apache Documentation – Main Apache Web Server documentation.

HotScripts.com – User management resources.

The CGI Resource Index – Password protection resources.

CGI-Index.com – Security resources.

DirectoryPass – DirectoryPass is a very powerful, yet simple to use .htaccess management system.

Locked Area – Locked Area is a highly sophisticated password protection and membership management system written in Perl.

OpenCrypt – OpenCrypt is a fully automated and self-managing membership/user management system which is more than capable of the most complex multi-domain installations, whilst still being usable in the most simple of circumstances.
