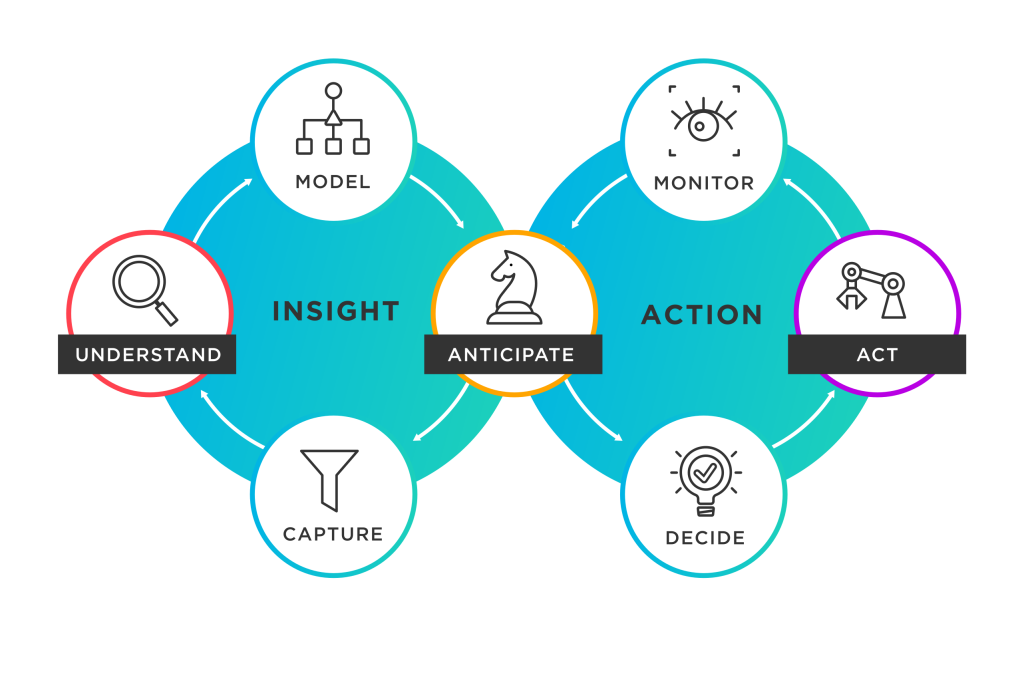

Decision Management Systems (DMS) are software platforms or frameworks that facilitate the management, automation, and optimization of business decisions. These systems typically incorporate business rules management, analytics, and decision modeling capabilities to enable organizations to make informed and consistent decisions. DMS can be used across various industries and business functions, including finance, healthcare, customer service, supply chain management, and more.

Here are 10 popular Decision Management Systems (DMS):

- IBM Operational Decision Manager

- FICO Decision Management Suite

- SAS Decision Manager

- Oracle Business Rules

- Pega Decision Management

- TIBCO BusinessEvents

- Red Hat Decision Manager

- SAP Decision Service Management

- OpenRules

- Drools

1. IBM Operational Decision Manager:

IBM Operational Decision Manager (ODM) provides a comprehensive platform for modeling, automating, and optimizing business decisions. It combines business rules management, predictive analytics, and optimization techniques.

Key features:

- Business Rules Management: IBM ODM includes a business rules management system (BRMS) for defining, managing, and governing business rules. Business analysts can author and update rules through its interface without writing code.

- Decision Modeling: ODM includes decision modeling capabilities that enable organizations to model and visualize their decision logic using decision tables, decision trees, and decision flowcharts. This makes it easier to understand and communicate complex decision-making processes.

- Decision Validation and Testing: ODM provides tools for validating and testing decision models and business rules. Users can simulate different scenarios, analyze rule conflicts or inconsistencies, and verify the accuracy and completeness of their decision logic.
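
To make the ideas of a decision table and rule-conflict analysis concrete, here is a minimal Python sketch. It is not IBM ODM's API or rule format: the `Row` class, the loan-approval table, and the function names are all hypothetical, invented for illustration. It assumes a first-hit policy (the first matching row wins) and treats two rows as conflicting when their inputs overlap but their outcomes differ.

```python
from dataclasses import dataclass
from itertools import combinations
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Row:
    """One decision-table row: an inclusive score range, a risk tier, an outcome."""
    min_score: int
    max_score: int
    tier: str
    outcome: str

    def matches(self, score: int, tier: str) -> bool:
        return self.min_score <= score <= self.max_score and self.tier == tier


# Hypothetical loan-approval table, invented for this example.
TABLE = [
    Row(700, 850, "low", "approve"),
    Row(600, 699, "low", "review"),
    Row(650, 850, "high", "review"),
    Row(300, 649, "high", "decline"),
]


def decide(table: List[Row], score: int, tier: str) -> Optional[str]:
    """First-hit policy: return the outcome of the first matching row."""
    for row in table:
        if row.matches(score, tier):
            return row.outcome
    return None  # a gap: no row covers this input


def find_conflicts(table: List[Row]) -> List[Tuple[Row, Row]]:
    """Return pairs of rows whose inputs overlap but whose outcomes differ."""
    conflicts = []
    for a, b in combinations(table, 2):
        overlap = (
            a.tier == b.tier
            and a.min_score <= b.max_score
            and b.min_score <= a.max_score
        )
        if overlap and a.outcome != b.outcome:
            conflicts.append((a, b))
    return conflicts
```

Calling `decide(TABLE, 500, "low")` returns `None`, which exposes a gap in the table, and `find_conflicts` can be run whenever a row is added to catch overlapping rules before they reach production.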

2. FICO Decision Management Suite:

FICO’s DMS offers a suite of tools for decision modeling, optimization, and rules management. It enables organizations to automate and improve decision-making processes using advanced analytics.

Key features:

- Decision Modeling and Strategy Design: The suite provides a visual decision modeling environment that allows business analysts and domain experts to define and document decision logic using decision tables, decision trees, and decision flows. It enables the creation of reusable decision models and strategies.

- Business Rules Management: FICO Decision Management Suite includes a powerful business rules engine that allows organizations to define, manage, and execute complex business rules. It provides a user-friendly interface for managing rule sets, rule versioning, and rule governance.

- Analytics Integration: The suite integrates with advanced analytics capabilities, including predictive modeling, machine learning, and optimization techniques. This enables organizations to leverage data-driven insights to enhance decision-making and optimize outcomes.
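
As a rough illustration of how analytics and rules can work together, the sketch below feeds a model score into threshold rules. It is not FICO's API. The logistic coefficients, field names, and cut-offs are hypothetical placeholders, and a real deployment would load a trained model rather than hard-code weights.

```python
import math

# Hypothetical coefficients, invented for illustration only.
WEIGHTS = {"utilization": 2.0, "late_payments": 0.8}
BIAS = -3.0


def default_probability(applicant: dict) -> float:
    """Logistic score: estimated probability that the applicant defaults."""
    z = BIAS + sum(weight * applicant[name] for name, weight in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


def credit_decision(applicant: dict) -> str:
    """Apply a hard policy rule first, then rules driven by the model score."""
    if applicant["age"] < 18:
        return "decline"  # policy rule, independent of the model
    p = default_probability(applicant)
    if p < 0.10:
        return "approve"
    if p < 0.30:
        return "review"
    return "decline"
```

Keeping the policy rule separate from the score thresholds means either one can change (a retrained model, a new cut-off) without touching the other.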

3. SAS Decision Manager:

SAS Decision Manager is a comprehensive platform that allows organizations to model, automate, and monitor decision processes. It provides a visual interface for creating and deploying rules and decision flows.

Key features:

- Decision Modeling: SAS Decision Manager allows users to model and visualize decision logic using graphical interfaces and decision tables. It provides a user-friendly environment for business analysts and domain experts to define decision rules and dependencies.

- Business Rules Management: The platform offers a powerful business rules management system (BRMS) that enables organizations to define, manage, and govern business rules. It supports the creation and management of rule sets, rule libraries, and rule versioning.

- Decision Automation: SAS Decision Manager enables the automation of decision processes. It allows for the execution of decision logic within operational systems and workflows, reducing manual effort and ensuring consistent and timely decision-making.

4. Oracle Business Rules:

Oracle Business Rules provides a platform for modeling, automating, and managing business rules. It integrates with other Oracle products and offers a range of features for decision management.

Key features:

- Rule Authoring and Management: Oracle Business Rules offers a user-friendly interface for defining, authoring, and managing business rules. It provides a graphical rule editor that allows business users and subject matter experts to define rules using a visual representation.

- Decision Modeling: The platform supports decision modeling using decision tables, decision trees, and other visual representations. It enables users to define decision logic and dependencies in a structured and intuitive manner.

- Rule Repository and Versioning: Oracle Business Rules includes a rule repository that allows for the storage, organization, and versioning of rules. It provides a centralized location to manage and govern rules, ensuring consistency and traceability.
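
The idea behind a versioned rule repository can be sketched in a few lines of Python. This is only an in-memory toy, not how Oracle Business Rules stores rules: the `RuleRepository` class and its `publish` and `get` methods are hypothetical names chosen for this example.

```python
from typing import Callable, Dict, List, Optional

Rule = Callable[[dict], bool]  # a rule maps input facts to True/False


class RuleRepository:
    """Hypothetical in-memory store that keeps every published version of a rule."""

    def __init__(self) -> None:
        self._versions: Dict[str, List[Rule]] = {}

    def publish(self, name: str, rule: Rule) -> int:
        """Store a new version and return its 1-based version number."""
        versions = self._versions.setdefault(name, [])
        versions.append(rule)
        return len(versions)

    def get(self, name: str, version: Optional[int] = None) -> Rule:
        """Return a specific version, or the latest when version is None."""
        versions = self._versions[name]
        if version is None:
            return versions[-1]
        if not 1 <= version <= len(versions):
            raise KeyError(f"{name} has no version {version}")
        return versions[version - 1]


repo = RuleRepository()
repo.publish("large_order", lambda order: order["total"] > 1000)  # version 1
repo.publish("large_order", lambda order: order["total"] > 500)   # version 2

order = {"total": 750}
latest_says = repo.get("large_order")(order)        # evaluated with version 2
original_says = repo.get("large_order", 1)(order)   # replayed with version 1
```

Because old versions are never overwritten, a past decision can be replayed against the exact rule that produced it, which is the traceability benefit described above.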

5. Pega Decision Management:

Pega Decision Management is part of Pega’s unified platform for business process management and customer engagement. It provides tools for designing, executing, and optimizing business decisions.

Key features:

- Decision Modeling: Pega Decision Management allows users to model and visualize decision logic using decision tables, decision trees, and other visual representations. It provides a user-friendly interface for business users and domain experts to define and manage decision rules.

- Business Rules Management: The platform includes a powerful business rules engine that enables organizations to define, manage, and govern business rules. It supports the creation and management of rule sets, rule libraries, and rule versioning.

- Decision Strategy Design: Pega Decision Management provides tools for designing decision strategies. It allows users to define and orchestrate a series of decisions, actions, and treatments to optimize customer interactions and outcomes.
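
A decision strategy of this kind is often described as filter, score, then pick. The Python sketch below shows that shape under stated assumptions: the `Action` class, the three offers, and the fixed propensity values are hypothetical, and the ranking formula (propensity times value) is one common choice rather than Pega's actual implementation.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Action:
    name: str
    value: float                         # business value if the offer is accepted
    eligible: Callable[[dict], bool]     # eligibility rule
    propensity: Callable[[dict], float]  # estimated acceptance probability


# Hypothetical offers; a real system would get propensities from a model.
ACTIONS = [
    Action("premium_card", 120.0, lambda c: c["income"] >= 50000, lambda c: 0.10),
    Action("savings_account", 40.0, lambda c: True, lambda c: 0.20),
    Action("student_loan", 80.0, lambda c: c["age"] < 30, lambda c: 0.08),
]


def next_best_action(customer: dict, actions: List[Action]) -> Optional[str]:
    """Filter by eligibility, then pick the action with the highest expected value."""
    candidates = [a for a in actions if a.eligible(customer)]
    if not candidates:
        return None
    best = max(candidates, key=lambda a: a.propensity(customer) * a.value)
    return best.name
```

A customer who fails the income rule never sees the premium card, however valuable it is, because eligibility is applied before ranking.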

6. TIBCO BusinessEvents:

TIBCO BusinessEvents is a complex event processing platform that enables organizations to make real-time decisions based on streaming data and business rules. It offers high-performance event processing and decision automation capabilities.

Key features:

- Event Processing: TIBCO BusinessEvents provides powerful event processing capabilities that allow organizations to detect, analyze, and correlate events in real time. It can handle high volumes of events from multiple sources and process them with low latency.

- Complex Event Processing (CEP): The platform supports complex event processing, which involves analyzing and correlating multiple events to identify patterns, trends, and anomalies. It enables organizations to gain insights from event data and take appropriate actions in real time.

- Business Rules and Decision Management: TIBCO BusinessEvents incorporates a business rules engine that allows organizations to define, manage, and execute business rules. It enables the automation of decision-making processes based on real-time event data.
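
To show what correlating events over time looks like, here is a small Python sketch of one CEP pattern: several failed logins by the same user inside a sliding time window. It does not use TIBCO BusinessEvents. The event fields (`type`, `user`, `ts`), the class name, and the thresholds are assumptions made for the example, and events are assumed to arrive in timestamp order.

```python
from collections import defaultdict, deque


class FailedLoginDetector:
    """Flags a user once `threshold` failures fall within `window_seconds`."""

    def __init__(self, threshold: int = 3, window_seconds: float = 60.0) -> None:
        self.threshold = threshold
        self.window = window_seconds
        self._recent = defaultdict(deque)  # user -> timestamps of recent failures

    def on_event(self, event: dict) -> bool:
        """Process one event; return True if it completes the pattern."""
        if event["type"] != "login_failed":
            return False
        times = self._recent[event["user"]]
        times.append(event["ts"])
        # Evict failures that have slid out of the time window.
        while times and event["ts"] - times[0] > self.window:
            times.popleft()
        return len(times) >= self.threshold
```

A rule such as "lock the account" could then be attached to the `True` result, which is the link between event processing and decision automation.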

7. Red Hat Decision Manager:

Red Hat Decision Manager is an open-source decision management platform, built on the Drools project, that combines business rules management, complex event processing, and predictive analytics. It provides tools for building and managing decision services.

Key features:

- Business Rules Management: Red Hat Decision Manager offers a powerful business rules engine that allows organizations to define, manage, and execute business rules. It provides a user-friendly interface for business users and domain experts to author and maintain rules.

- Decision Modeling: The platform supports decision modeling using decision tables, decision trees, and other visual representations. It allows users to model and visualize decision logic in a structured and intuitive manner.

- Decision Services and Execution: Red Hat Decision Manager enables the deployment of decision services as reusable components that can be integrated into operational systems and workflows. It supports real-time or near-real-time decision execution within existing applications.

8. SAP Decision Service Management:

SAP Decision Service Management (DSM) builds on SAP's BRFplus business rules framework. It allows organizations to model, execute, and monitor decision services based on business rules.

Key features:

- Business Rules Engine: SAP decision management solutions typically include a business rules engine that allows organizations to define and manage their business rules. This engine enables the execution of rules in real time or as part of automated processes.

- Decision Modeling and Visualization: These solutions often provide tools for decision modeling and visualization, allowing business users and analysts to design decision logic using graphical interfaces, decision tables, or other visual representations.

- Decision Automation: SAP decision management solutions support the automation of decision-making processes. This involves integrating decision services into operational systems and workflows, enabling consistent and automated decision execution.

9. OpenRules:

OpenRules is an open-source decision management platform that focuses on business rules management. It provides a lightweight and flexible solution for modeling and executing business rules.

Key features:

- Rule Authoring and Management: OpenRules offers a user-friendly and intuitive rule authoring environment. It provides a spreadsheet-based interface, allowing business users and subject matter experts to define and maintain rules using familiar spreadsheet tools such as Microsoft Excel or Google Sheets.

- Rule Execution Engine: OpenRules includes a powerful rule execution engine that evaluates and executes business rules. It supports both forward and backward chaining rule execution, allowing complex rule dependencies and reasoning to be handled effectively.

- Decision Modeling and Visualization: The platform supports decision modeling using decision tables, decision trees, and other visual representations. It enables users to model and visualize decision logic in a structured and easy-to-understand manner.
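
The appeal of spreadsheet-based authoring is that rules live in tabular data that business users can edit, while a small engine evaluates them. The Python sketch below illustrates that separation with CSV text standing in for a spreadsheet. The column names, the `any` wildcard, and the first-match policy are assumptions for this example and do not reflect OpenRules' actual Excel layout.

```python
import csv
import io
from typing import List, Tuple

# Hypothetical rule sheet; in practice this would be exported from a spreadsheet.
SHEET = """customer_type,min_order,discount_pct
gold,0,15
silver,100,10
silver,0,5
any,0,0
"""


def load_table(text: str) -> List[Tuple[str, float, float]]:
    """Parse the sheet into (customer_type, min_order, discount_pct) rows."""
    rows = []
    for rec in csv.DictReader(io.StringIO(text)):
        rows.append(
            (rec["customer_type"], float(rec["min_order"]), float(rec["discount_pct"]))
        )
    return rows


def discount(table: List[Tuple[str, float, float]], customer_type: str,
             order_total: float) -> float:
    """Return the discount from the first row that matches, top to bottom."""
    for ctype, min_order, pct in table:
        if ctype in (customer_type, "any") and order_total >= min_order:
            return pct
    raise LookupError("no matching row")
```

Changing a discount then means editing one cell in the sheet, with no change to the evaluation code.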

10. Drools:

Drools is an open-source, Java-based business rules management system that enables organizations to model, validate, and execute business rules. It can be embedded as a library in Java applications or integrated with other systems as a decision service.

Key features:

- Rule Authoring and Management: Drools offers a rich set of tools and editors for authoring and managing business rules. It provides its own rule language (DRL), support for custom domain-specific languages (DSLs), and a graphical rule editor, allowing both business users and developers to define and maintain rules effectively.

- Rule Execution Engine: Drools includes a highly efficient and scalable rule execution engine. It supports forward chaining, backward chaining, and hybrid rule execution strategies, allowing complex rule dependencies and reasoning to be handled efficiently.

- Decision Modeling and Visualization: The platform supports decision modeling using decision tables, decision trees, and other visual representations. It allows users to model and visualize decision logic in a structured and intuitive manner.
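
Forward chaining, mentioned above, means starting from known facts and repeatedly firing rules whose conditions are satisfied until nothing new can be derived. The Python sketch below shows the idea with a naive fixed-point loop. It is not Drools code or DRL syntax: the rule tuples and fact names are hypothetical, and production engines use far more efficient matching algorithms than rescanning every rule.

```python
from typing import FrozenSet, Iterable, List, Set, Tuple

# A rule is (name, premises, conclusion): when all premises are known facts,
# the conclusion is added as a new fact.
Rule = Tuple[str, FrozenSet[str], str]

# Hypothetical rules, invented for illustration.
RULES: List[Rule] = [
    ("r1", frozenset({"income_verified", "good_credit"}), "low_risk"),
    ("r2", frozenset({"low_risk", "existing_customer"}), "preapproved"),
    ("r3", frozenset({"preapproved"}), "send_offer"),
]


def forward_chain(facts: Iterable[str], rules: List[Rule]) -> Set[str]:
    """Fire rules repeatedly until no rule adds a new fact."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for _name, premises, conclusion in rules:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known
```

Starting from `income_verified`, `good_credit`, and `existing_customer`, the loop derives `low_risk`, then `preapproved`, then `send_offer`, each rule's conclusion enabling the next.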